Hebbian Learning Meets Hopfield Networks: The Architecture That Was Always Smarter Than Transformers

For the past decade, transformers and their attention mechanisms have dominated the AI landscape. From GPT to BERT to Vision Transformers, the "attention is all you need" mantra reshaped how we think about machine learning. Translator systems, the encoder-decoder pipelines that convert data between representations, became the backbone of everything from language processing to image generation. They were revolutionary. They were powerful.

And now, they are hitting a wall.

The irony is that the solution has existed since 1982. That year, John Hopfield published a paper in the Proceedings of the National Academy of Sciences that introduced a recurrent neural network with a property transformers have never truly achieved: guaranteed convergence to stable states through energy minimization. The Hopfield network didn't just learn patterns; it stored them as physical attractors in an energy landscape, retrievable even from noisy or incomplete inputs.

The AI community is only now beginning to internalize what the physics and neuroscience communities have known for decades: the energy-based, associative approach to neural computation isn't a historical curiosity; it's a foundational paradigm that the transformer era merely built upon without fully understanding.

The Transformer Ceiling

Transformers work by computing relationships between every element in a sequence simultaneously through self-attention. This is brilliant for language. It's brilliant for structured data with clear sequential patterns. But the real world (the world of robotics, embedded circuits, sensor-driven decision-making, and edge computing) doesn't organize itself into neat sequences waiting to be attended to.

The transformer architecture assumes abundance: abundant compute, abundant memory, abundant power. Self-attention scales quadratically with sequence length. The cost of the feed-forward layers grows only linearly with sequence length, but those layers are very large. When you deploy a transformer-based system on a mobile robot navigating a warehouse, or embed it into an electronic circuit controlling a manufacturing arm, those assumptions collapse.

The translator paradigm carries its own limitation: it treats intelligence as primarily a conversion problem between fixed representations. Encode the input. Decode the output. But real intelligence doesn't just translate: it remembers, it associates, and it converges on stable interpretations even when the input is degraded or ambiguous.

We've been patching these problems with quantization, distillation, pruning, and a hundred other optimization tricks. Edge AI surveys in 2025 confirm that deploying deep learning on embedded hardware remains "constrained by strict limitations in memory, computation and energy." But optimization of a fundamentally mismatched architecture only delays the reckoning. What we need is an architecture that treats memory, stability, and energy efficiency as first principles, not afterthoughts.

Enter the Hopfield Neural Network

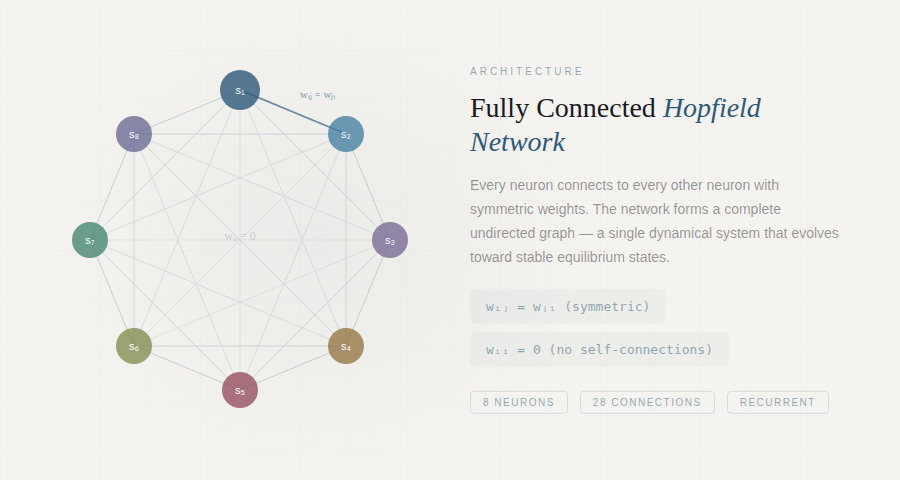

A Hopfield network is a recurrent neural network consisting of a single layer of neurons, where each neuron is connected to every other neuron except itself. The network forms a complete undirected graph: every pair of neurons shares a symmetric connection weight, meaning the strength from neuron i to neuron j is identical to the strength from j to i. There are no self-connections (wᵢᵢ = 0). No layered hierarchy. No sequential processing pipeline. Instead, the entire network functions as a single, interconnected dynamical system that evolves over time toward stable equilibrium states.

A Hopfield network with fully connected neurons. Every node connects to every other node with symmetric weights (wᵢⱼ = wⱼᵢ), forming a complete undirected graph. No self-connections exist.

The elegance of this architecture lies in its energy function. Hopfield proved that his network possesses an associated Lyapunov energy function:

E = −½ Σᵢ Σⱼ wᵢⱼ sᵢ sⱼ − Σᵢ θᵢ sᵢ

When neurons are updated asynchronously (one at a time), this energy is guaranteed to decrease or remain constant with every neuron update. The network therefore always converges: it never oscillates endlessly, never diverges, never gets trapped in infinite computation loops. It settles. Bruck proved in 1990 that this settling behavior connects to cuts in the associated graph: the network essentially performs a greedy algorithm for the max-cut problem at every update step.
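The monotone energy descent can be checked numerically. The following is a minimal sketch, not a reference implementation: the helper names `energy` and `async_update`, the random integer weights, and the network size are all illustrative assumptions. The bias enters the local field with a plus sign so that it matches the energy formula as written above.

```python
import random

def energy(W, theta, s):
    """E = -1/2 * sum_ij W[i][j]*s[i]*s[j] - sum_i theta[i]*s[i]."""
    n = len(s)
    pair = sum(W[i][j] * s[i] * s[j] for i in range(n) for j in range(n))
    bias = sum(theta[i] * s[i] for i in range(n))
    return -0.5 * pair - bias

def async_update(W, theta, s, i):
    """Align neuron i with its local field; a zero field leaves it unchanged."""
    field = sum(W[i][j] * s[j] for j in range(len(s))) + theta[i]
    if field > 0:
        s[i] = 1
    elif field < 0:
        s[i] = -1

# Hypothetical toy network: symmetric integer weights, zero diagonal.
rng = random.Random(0)
n = 8
W = [[0] * n for _ in range(n)]
for i in range(n):
    for j in range(i + 1, n):
        W[i][j] = W[j][i] = rng.randint(-3, 3)
theta = [rng.randint(-1, 1) for _ in range(n)]
s = [rng.choice([-1, 1]) for _ in range(n)]

# Sweep over the neurons until a full pass changes nothing.
trace = [energy(W, theta, s)]
changed = True
while changed:
    changed = False
    for i in range(n):
        before = s[i]
        async_update(W, theta, s, i)
        changed = changed or s[i] != before
        trace.append(energy(W, theta, s))
```

Every entry of `trace` is less than or equal to the one before it, and the loop stops only once the state is a fixed point of the update rule.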

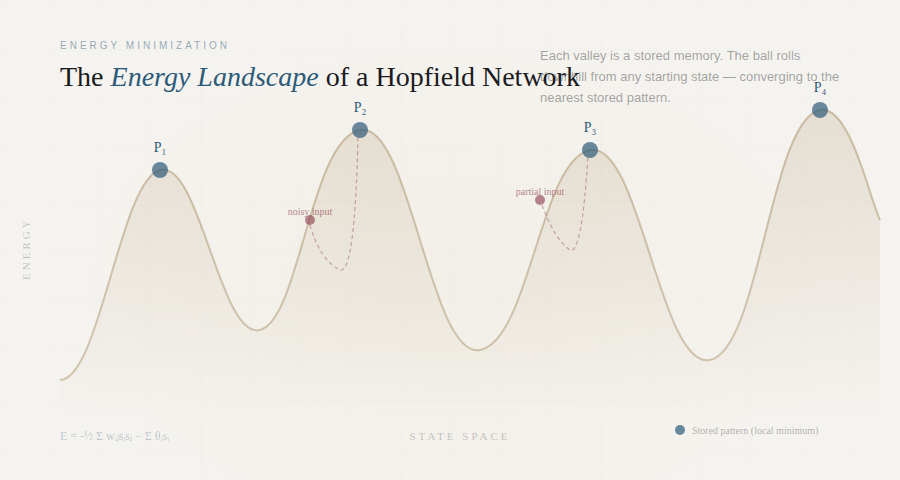

And where the network settles is where the intelligence lives. Stored patterns correspond to local minima of the energy function, the valleys in the energy landscape. When you present the network with a corrupted, noisy, or incomplete version of a stored pattern, the dynamics roll the network state downhill until it falls into the nearest valley: the stored pattern that best matches the input.

The energy landscape of a Hopfield network. Each valley (local minimum) corresponds to a stored memory pattern. Starting from any initial state, the network dynamics roll "downhill" until settling into the nearest attractor, recovering the complete pattern from partial or noisy input.

This is content-addressable associative memory: the ability to recall complete memories from partial cues, something biological brains do effortlessly but transformers can only approximate through expensive computation.

The connection to physics runs deep. The Hopfield network is mathematically equivalent to an Ising model, the same framework used to describe magnetism in statistical mechanics. The Sherrington-Kirkpatrick model of spin glass, published in 1975, is essentially a Hopfield network with random weights. Hopfield's insight was to exploit this property deliberately, engineering the energy landscape so that each minimum corresponds to a useful stored pattern.
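The correspondence can be written out term by term. The block below is a sketch using the standard Ising Hamiltonian with couplings J and external fields h; these symbols are introduced here for illustration and do not appear elsewhere in this article.

```latex
% Ising Hamiltonian over spins \sigma_i \in \{-1, +1\}
H(\sigma) = -\sum_{i<j} J_{ij}\,\sigma_i \sigma_j \;-\; \sum_i h_i\,\sigma_i

% Hopfield energy with symmetric weights and w_{ii} = 0:
% the double sum counts every pair twice, which cancels the factor 1/2
E(s) = -\tfrac{1}{2}\sum_i \sum_j w_{ij}\, s_i s_j - \sum_i \theta_i s_i
     = -\sum_{i<j} w_{ij}\, s_i s_j - \sum_i \theta_i s_i

% Identification: \sigma_i = s_i, \quad J_{ij} = w_{ij}, \quad h_i = \theta_i
```

Under this identification the stored patterns play the role of low-energy spin configurations, and the weights are couplings chosen by design rather than drawn at random.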

Hebbian Learning: Neurons That Fire Together Wire Together

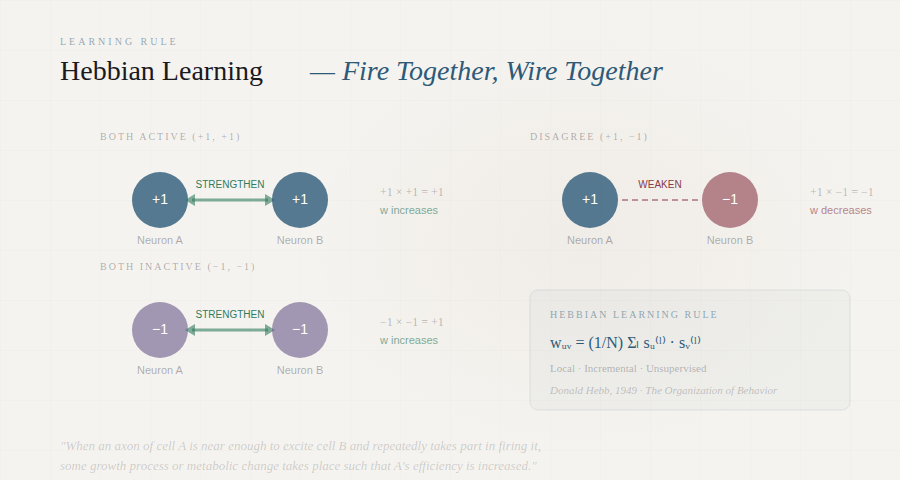

The learning rule that makes Hopfield networks work has roots that go back even further, to Donald Hebb's 1949 book The Organization of Behavior, which proposed what is now considered the founding principle of neural learning theory.

Hebb's insight was deceptively simple: when a presynaptic cell repeatedly and persistently takes part in firing a postsynaptic cell, the synaptic connection between them strengthens. "Neurons that fire together, wire together" is the popular summary, though Hebb himself was more precise, emphasizing that cell A needs to take part in causing cell B to fire, not merely fire at the same time. This temporal causality foreshadowed what is now known as spike-timing-dependent plasticity (STDP). Experimental support for activity-dependent synaptic change came decades later, including Eric Kandel's groundbreaking research on the sea slug Aplysia californica.

Hebbian learning: when two connected neurons are repeatedly active together, the synapse between them strengthens. This principle, "neurons that fire together wire together," is the biological foundation of how Hopfield networks store patterns.

In the Hopfield network, Hebbian learning is implemented as:

wᵢⱼ = (1/N) Σₗ sᵢ(l) · sⱼ(l), summed over all stored patterns l, for i ≠ j

When two neurons have the same state in a given pattern (both +1 or both −1), their product is positive, strengthening the excitatory connection. When they disagree, the product is negative, creating an inhibitory connection. The cumulative effect sculpts an energy landscape with a valley at each stored pattern.
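A short sketch of the rule makes the sign behavior concrete. The function name `hebbian_weights` and the two toy patterns are illustrative, and N is taken here to be the number of neurons, which is an assumption since the formula above does not define it.

```python
def hebbian_weights(patterns):
    """Hebbian weights: sum of pairwise products over patterns, scaled by 1/N."""
    n = len(patterns[0])  # assumption: N is the number of neurons
    W = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    W[i][j] += p[i] * p[j] / n
    return W

# Two toy patterns over four neurons.
patterns = [
    [1, 1, -1, -1],
    [1, -1, 1, -1],
]
W = hebbian_weights(patterns)
```

Neurons 0 and 3 disagree in both patterns, so `W[0][3]` ends up negative (inhibitory). Neurons 0 and 1 agree in one pattern and disagree in the other, so their contributions cancel and `W[0][1]` is zero.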

The Hebbian rule is local (each weight update uses only information available to the two connected neurons) and incremental (new patterns can be stored without re-processing old ones). Remarkably, Hebbian learning in a normalized form such as Oja's rule can be shown mathematically to perform unsupervised principal component analysis (PCA) of the input data: the network naturally extracts the most statistically significant features from its environment.

In 1997, Amos Storkey introduced an improved learning rule that takes into account the local field at each neuron, achieving greater storage capacity than standard Hebbian learning. This demonstrates that even within the same architecture, the choice of learning algorithm matters deeply, a principle that resonates with the broader lesson of neural network research.
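The PCA connection is usually made precise through Oja's rule, a Hebbian update with a built-in normalization term. The article does not name the variant, so treating it as Oja's rule is an assumption of this sketch, as are the toy data set, the learning rate, and the function name `oja_step`.

```python
def oja_step(w, x, lr):
    """One Oja update: Hebbian term y*x minus a decay term y*y*w."""
    y = sum(wi * xi for wi, xi in zip(w, x))  # neuron output
    return [wi + lr * y * (xi - y * wi) for wi, xi in zip(w, x)]

# Toy zero-mean data: most variance lies along the (1, 1) direction.
data = [(2.0, 2.0), (-2.0, -2.0), (0.5, -0.5), (-0.5, 0.5)]

w = [1.0, 0.0]  # arbitrary starting weights
for epoch in range(500):
    for x in data:
        w = oja_step(w, x, lr=0.01)
```

After training, `w` points along the leading principal direction of the data, (1, 1) normalized to unit length, even though every update used only the input and the neuron's own output.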
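For comparison with the plain Hebbian rule, here is a sketch of the Storkey update as it is commonly stated: each new pattern adds the Hebbian term and subtracts two terms built from local fields computed with the previous weights. The function name `storkey_store` and the toy patterns are illustrative, and the formula is written from the usual textbook form rather than taken from this article.

```python
def storkey_store(W, pattern):
    """Return new weights after storing one pattern with the Storkey rule."""
    n = len(pattern)
    # total[i] = sum over k of W[i][k] * pattern[k], using the old weights
    total = [sum(W[i][k] * pattern[k] for k in range(n)) for i in range(n)]
    new_W = [row[:] for row in W]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue  # keep the diagonal at zero (no self-connections)
            # local fields h_ij and h_ji exclude the terms k = i and k = j
            h_ij = total[i] - W[i][i] * pattern[i] - W[i][j] * pattern[j]
            h_ji = total[j] - W[j][j] * pattern[j] - W[j][i] * pattern[i]
            new_W[i][j] += (pattern[i] * pattern[j]
                            - pattern[i] * h_ji
                            - h_ij * pattern[j]) / n
    return new_W

a = [1, 1, -1, -1]
b = [1, -1, 1, -1]
W = [[0.0] * 4 for _ in range(4)]
W = storkey_store(W, a)
W = storkey_store(W, b)
```

The first pattern is stored exactly as in the Hebbian rule, because all local fields are zero when the weights start at zero. The correction terms only matter once earlier patterns are already in the weights.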

The Breakthrough: "Hopfield Networks is All You Need"

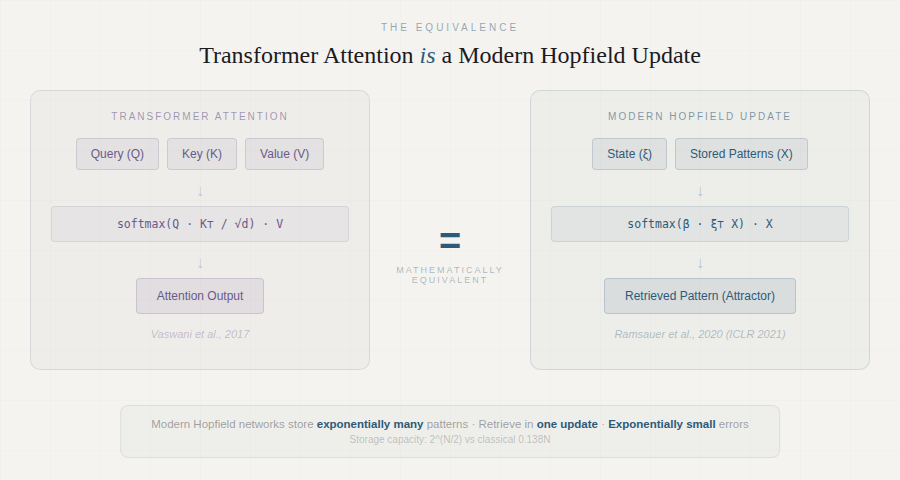

In 2020, a team led by Hubert Ramsauer at the Johannes Kepler University Linz published a paper whose title provocatively echoes the transformer's origin story: "Hopfield Networks is All You Need." Their contribution was a mathematical proof of what many had suspected: the self-attention mechanism in transformers is exactly the update rule of a modern continuous Hopfield network.

The connection between modern Hopfield networks and transformer attention. The softmax-based attention mechanism in transformers is mathematically equivalent to one update step of a continuous Hopfield network with exponential energy function. Image from Ramsauer et al., "Hopfield Networks is All You Need."

This wasn't merely an analogy. The team showed that by generalizing the classical Hopfield energy function to continuous states and using an exponential interaction term, the resulting update rule becomes:

Z = softmax(β · R Wq Wk⊤ Y⊤) Y Wk Wv

which is precisely the transformer attention formula. The modern Hopfield network can store exponentially many patterns (exponential in the dimension of the associative space), retrieves a pattern in a single update, and has exponentially small retrieval errors.

The paper identified three types of energy minima in this modern framework: (1) a global fixed point that averages over all stored patterns, (2) metastable states that average over subsets of patterns, and (3) fixed points that store single patterns. Transformer heads in lower layers tend to operate in the global averaging regime, while higher-layer heads use metastable states to collect and process information, a finding that offers deep insight into why transformers work the way they do.
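A stripped-down version of this update shows the one-step retrieval. The sketch below handles a single query and drops the projection matrices Wq, Wk, and Wv (equivalent to setting them to the identity), which is a simplifying assumption; the function names and the toy patterns are illustrative and are not the API of the released Hopfield layers.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def hopfield_update(patterns, query, beta):
    """One modern Hopfield update: a softmax-weighted average of the patterns."""
    scores = [beta * sum(p_d * q_d for p_d, q_d in zip(p, query)) for p in patterns]
    weights = softmax(scores)
    dim = len(query)
    return [sum(w * p[d] for w, p in zip(weights, patterns)) for d in range(dim)]

patterns = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, 1, -1, -1, 1, 1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]
# The first stored pattern with its fourth entry flipped.
query = [1, 1, 1, -1, -1, -1, -1, -1]
retrieved = hopfield_update(patterns, query, beta=2.0)
```

A single call moves the corrupted query to within a small numerical distance of the first stored pattern, because the softmax puts almost all of its weight on the best-matching pattern.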
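The three regimes can be seen directly in the softmax weights that the update assigns to each stored pattern. This is a toy sketch under assumed values: the patterns, the queries, and the choices of β are picked by hand to make each regime visible, and `retrieval_weights` is a hypothetical helper name.

```python
import math

def retrieval_weights(patterns, query, beta):
    """Softmax weights over stored patterns for one query."""
    scores = [beta * sum(p_d * q_d for p_d, q_d in zip(p, query)) for p in patterns]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

patterns = [
    [1, 1, 1, 1],
    [1, 1, 1, -1],     # differs from the first pattern in one entry
    [-1, -1, -1, -1],  # far from both
]

# (1) Small beta: weights are nearly uniform, a global average.
global_avg = retrieval_weights(patterns, [1, 1, 1, 0], beta=0.01)

# (2) Larger beta, query equally close to the two similar patterns:
#     the weight splits over that subset, a metastable state.
metastable = retrieval_weights(patterns, [1, 1, 1, 0], beta=2.0)

# (3) Large beta, query matching one pattern: a single-pattern fixed point.
single = retrieval_weights(patterns, [1, 1, 1, 1], beta=5.0)
```

The inverse temperature β and the separation between patterns together decide which regime a given query falls into.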

The practical implication was immediate: the team released Hopfield layers as drop-in PyTorch modules that could replace pooling layers, GRU/LSTM layers, and standard attention layers. In benchmarks, these Hopfield layers achieved state-of-the-art results on immune repertoire classification (with several hundred thousand instances per sample), multiple instance learning problems, and UCI classification benchmarks where traditional deep learning methods typically struggle.

In December 2025, a NeurIPS paper by Masumura and Taki went further, showing that going beyond the "adiabatic approximation" reveals hidden states in the Hopfield-transformer correspondence that can solve the rank collapse and token uniformity problems that plague deep transformers, improving accuracy without adding any training parameters.

The Growing Consensus: Who Else Is Saying This?

This argument, that Hopfield networks represent the next paradigm beyond transformers, is not speculation. It is being actively pursued by major research institutions, and the evidence is accumulating fast. Here are the key voices and papers converging on the same conclusion.

IBM Research and Dmitry Krotov

In January 2025, IBM Research published an extensive interview with Dmitry Krotov, Hopfield's long-time collaborator and one of the architects of Dense Associative Memory. Krotov made the case directly: since Hopfield networks mirror the brain's recurrent feedback loops, unlike the feedforward architecture of 90% of current AI models, they represent "a promising alternative to today's feedforward networks." He pointed out that transformers process information in one direction only, and as context windows grow longer, computational complexity rapidly increases. The brain, by contrast, uses recurrent feedback loops to summarize and store past information in memory. Hopfield networks work the same way.

Krotov also described the Energy Transformer (NeurIPS 2023), a collaboration between IBM Research and Georgia Tech that doesn't just layer Hopfield insights onto existing transformers; it replaces the entire sequence of feedforward transformer blocks with a single large Associative Memory model. The attention mechanism in the Energy Transformer is explicitly different from conventional attention: it is derived from first principles of energy minimization, not patched onto a feedforward pipeline.

🔗 IBM Research, "Searching for brain-inspired AI algorithms" (Jan 2025)

🔗 Energy Transformer, arXiv:2302.07253 (NeurIPS 2023)

Johannes Kepler University - Hochreiter's Group

Sepp Hochreiter, co-inventor of the LSTM, led the team that published "Hopfield Networks is All You Need." Their Hopfield layers repository provides production-ready PyTorch modules that serve as drop-in replacements for transformer attention layers, pooling layers, and recurrent layers. The companion blog post walks through the mathematical derivation showing that the softmax attention formula is a special case of the modern Hopfield update rule and that Hopfield layers offer additional functionalities that standard attention cannot provide, including static learned prototypes and flexible associative memory configurations.

🔗 Hopfield Networks is All You Need - ICLR 2021

NeurIPS 2025: Hopfield Attention Improves GPT and Vision Transformers

The most recent evidence comes from Masumura and Taki's December 2025 NeurIPS paper. They showed that Modern Hopfield Attention (MHA), which adds hidden states derived from the full (non-adiabatic) Hopfield dynamics, systematically improves GPT-2 and LLaMA language models as well as Vision Transformers. The improvements came with zero additional training parameters. More importantly, MHA was found to solve the rank collapse problem (where attention matrices in deep transformers degenerate, causing all tokens to converge to similar representations), a fundamental pathology that has plagued transformer scaling efforts. The authors concluded: "We hope that this research will open new possibilities for the systematic design of Transformer architectures using Hopfield networks."

🔗 On the Role of Hidden States of Modern Hopfield Network in Transformer, arXiv:2511.20698 (NeurIPS 2025)

Nature Communications: Biologically Plausible Online Learning

In May 2024, Nature Communications published work on the Sparse Quantized Hopfield Network, a model that learns using local learning rules in online-continual scenarios, exactly the way biological brains do. This addresses a fundamental limitation of transformers, which require non-local backpropagation and offline training on curated datasets. The paper positions Hopfield-based architectures as the natural bridge between artificial neural networks and neuromorphic computing hardware.

🔗 A Sparse Quantized Hopfield Network for Online-Continual Memory, Nature Communications (2024)

Outlier-Efficient Hopfield Layers for Large Foundation Models

A 2024 paper tackled a practical problem in large transformer models: the tendency to allocate attention to uninformative tokens (delimiters, punctuation marks), known as the "no-op outlier" problem. Their solution was an outlier-efficient Hopfield energy function that classifies these tokens and routes them to a zero-energy point, preventing them from diluting useful attention. The resulting model subsumes Softmax₁ attention as a special case and was validated on BERT, OPT, and Vision Transformers. This is Hopfield theory solving real-world transformer engineering problems.
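To see why Softmax₁ helps with uninformative tokens, compare it with ordinary softmax. The sketch below assumes the common definition of Softmax₁, which adds 1 to the softmax denominator; it illustrates that definition only and is not the paper's implementation.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(x - m) for x in scores]
    total = sum(exps)
    return [e / total for e in exps]

def softmax_1(scores):
    """Assumed definition: exp(x_i) / (1 + sum_j exp(x_j)), computed stably."""
    m = max(max(scores), 0.0)  # the extra 1 behaves like a score of 0
    exps = [math.exp(x - m) for x in scores]
    denom = math.exp(-m) + sum(exps)
    return [e / denom for e in exps]

# A head with nothing useful to attend to: every score is strongly negative.
scores = [-10.0, -10.0, -10.0]
forced = softmax(scores)      # weights still sum to 1
relaxed = softmax_1(scores)   # weights can all be close to 0
```

Ordinary softmax must spend a full unit of attention somewhere, even when every token is a poor match. With the extra term in the denominator, a head can effectively attend to nothing.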

🔗 Outlier-Efficient Hopfield Layers for Large Transformer-Based Models, arXiv:2404.03828 (2024)

Transformers as Energy Minimizers (January 2026)

The most recent theoretical work, published in January 2026, frames the entire transformer forward pass as intrinsic energy minimization, fully adopting the Hopfield perspective as the explanatory framework for why transformers work at all. The paper connects sparse modern Hopfield models to the full family of attention variants (softmax, sparsemax, α-entmax), showing they are all special cases of energy-based retrieval dynamics.
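Of the attention variants listed, sparsemax is the easiest to write down: it is the Euclidean projection of the score vector onto the probability simplex, and unlike softmax it can assign exactly zero weight. The sketch below uses the standard sort-based algorithm; it is a generic illustration, not code from the paper.

```python
def sparsemax(z):
    """Project scores z onto the probability simplex (sort-based algorithm)."""
    zs = sorted(z, reverse=True)
    cumsum = 0.0
    tau = 0.0
    for k, value in enumerate(zs, start=1):
        cumsum += value
        # The support is the largest k that satisfies this condition.
        if 1 + k * value > cumsum:
            tau = (cumsum - 1) / k
    return [max(v - tau, 0.0) for v in z]

peaked = sparsemax([2.0, 1.0, 0.1])   # one clear winner
shared = sparsemax([1.0, 0.8, 0.1])   # two close scores share the weight
```

In the first case all of the weight lands on the top score. In the second, the two close scores split the weight and the third gets exactly zero, which is the sparse retrieval behavior that softmax can only approximate.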

🔗 Transformers as Intrinsic Optimizers: Forward Inference through the Energy Principle, arXiv:2511.00907 (Jan 2026)

Review Papers and Community Resources

The broader research community is organizing around this convergence:

- "Energy-Based Learning and the Evolution of Hopfield Networks" (TechRxiv, April 2025): A comprehensive review tracing the full arc from Hopfield (1982) through Boltzmann Machines to the reinterpretation of transformer attention as modern Hopfield dynamics.

- "An Energy-Based Perspective on Attention Mechanisms in Transformers": An in-depth technical blog arguing that understanding transformers through the Hopfield energy lens "might lead to qualitatively different improvements beyond what is possible by relying on scaling and reducing computational complexity alone."

- Awesome Modern Hopfield Networks: A curated GitHub repository tracking 50+ papers on modern Hopfield networks across domains: asset allocation, change detection, creative thought reconstruction, out-of-distribution detection, immune repertoire classification, and more.

- "Is Hopfield Networks All You Need?" (Analytics India Magazine, Dec 2024): Practitioner-oriented coverage of how Hochreiter's team showed Hopfield networks are "interchangeable with state-of-the-art transformer models."

- "New Research in Hopfield Networks: A Short Intro" (Medium, July 2024): Accessible overview noting that storage capacity has grown from 0.138N to 2^(N/2) through exponential activation functions.

The trajectory is clear. This is not one lab's proposal or one paper's speculation. IBM Research, Johannes Kepler University, MIT, NeurIPS, Nature Communications, and the broader machine learning community are converging on the same conclusion from different directions: the energy-based, associative memory paradigm that Hopfield established is not just the foundation transformers were unknowingly built on; it is the framework that will carry AI beyond what transformers alone can achieve.

Why This Matters Now: The Case for Switching

Guaranteed Convergence for Safety-Critical Systems

Transformers produce outputs, but there is no mathematical guarantee of stability. Hopfield networks converge to stable states by proof: the energy function decreases monotonically. For robotics, autonomous vehicles, medical devices, and any system where reliability is non-negotiable, this is a guarantee that transformers cannot provide.

Associative Memory as the Core Capability

If you train a Hopfield network so that state (1, −1, 1, −1, 1) is an energy minimum, and present the corrupted input (1, −1, −1, −1, 1), the network converges to the correct stored pattern. It doesn't guess or interpolate; it rolls downhill in the energy landscape to the nearest attractor. For robotics with occluded sensors, embedded systems with noisy data, and any application requiring pattern completion from partial input, this is transformative.
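The five-neuron example can be reproduced in a few lines. This sketch assumes the pattern is stored with the plain Hebbian rule and recalled with asynchronous sign updates; the function names `train` and `recall` are illustrative.

```python
def train(pattern):
    """Hebbian weights for a single stored pattern, zero diagonal."""
    n = len(pattern)
    return [[0.0 if i == j else pattern[i] * pattern[j] / n for j in range(n)]
            for i in range(n)]

def recall(W, state):
    """Update one neuron at a time until a full sweep changes nothing."""
    s = list(state)
    changed = True
    while changed:
        changed = False
        for i in range(len(s)):
            field = sum(W[i][j] * s[j] for j in range(len(s)))
            new = 1 if field > 0 else -1 if field < 0 else s[i]
            if new != s[i]:
                s[i] = new
                changed = True
    return s

stored = [1, -1, 1, -1, 1]
corrupted = [1, -1, -1, -1, 1]   # third entry flipped
W = train(stored)
result = recall(W, corrupted)    # → [1, -1, 1, -1, 1]
```

Only the third neuron sees a local field that disagrees with its current state, so it is the only one that flips. One caveat: the negated pattern (−1, 1, −1, 1, −1) is also an energy minimum, so an input closer to it would settle there instead.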

A Universal Optimization Engine

Hopfield and David Tank demonstrated in 1985 that Hopfield networks can be used to find good solutions to the traveling salesman problem. If a cost function can be written in the form of the Hopfield energy, then the network's equilibrium points are candidate solutions (local minima of that cost). Since then, the architecture has been applied to job-shop scheduling, channel allocation in wireless networks, image restoration, analog-to-digital conversion, mobile routing, and combinatorial optimization. A 2024 Nature Communications paper introduced a Sparse Quantized Hopfield Network for online-continual memory that learns with local rules, much as biological brains do, a capability transformers fundamentally lack.
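A smaller problem than the traveling salesman shows the recipe. Number partitioning asks for a split of a list of numbers into two groups with equal sums; the squared imbalance (Σ aᵢsᵢ)² equals a Hopfield energy with weights wᵢⱼ = −2aᵢaⱼ, up to a constant. The problem choice, the numbers, and the function names below are illustrative assumptions and are not taken from the Hopfield and Tank paper.

```python
def partition_weights(numbers):
    """Weights whose Hopfield energy equals the squared imbalance minus a constant."""
    n = len(numbers)
    return [[0 if i == j else -2 * numbers[i] * numbers[j] for j in range(n)]
            for i in range(n)]

def settle(W, state):
    """Asynchronous sign updates until the state stops changing."""
    s = list(state)
    changed = True
    while changed:
        changed = False
        for i in range(len(s)):
            field = sum(W[i][j] * s[j] for j in range(len(s)))
            new = 1 if field > 0 else -1 if field < 0 else s[i]
            if new != s[i]:
                s[i] = new
                changed = True
    return s

numbers = [4, 5, 6, 7, 8]
sides = settle(partition_weights(numbers), [1, 1, 1, 1, 1])
imbalance = sum(a * s for a, s in zip(numbers, sides))
```

For this input the network settles with 4, 5, and 6 on one side and 7 and 8 on the other, a perfect split. In general the dynamics only guarantee a local minimum: a state where no single move improves the cost.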

Hardware That Thinks Without Software

The symmetric weight constraint (wᵢⱼ = wⱼᵢ) maps directly onto analog electronic circuits: a resistive connection is inherently symmetric. Hopfield networks can be fabricated as ASICs or FPGAs with inference at nanosecond timescales. Nature's 2025 collection on neuromorphic computing confirms this direction: energy-efficient analog hardware implementing neural dynamics at the edge, with content-addressable memory that needs no GPU, no CPU, and no software stack.

Neuromorphic and analog AI chips implementing neural network dynamics directly in hardware. The symmetric, energy-based structure of Hopfield networks maps naturally onto resistive circuits, enabling AI inference at nanosecond timescales without traditional software stacks.

Binary and Continuous Flexibility

Classical Hopfield networks use binary neurons (+1 or −1); continuous variants use sigmoidal activation and differential equations. Updates can be asynchronous (one neuron at a time, guaranteeing convergence) or synchronous (all at once, enabling parallelism). The same architecture adapts from a microcontroller with kilobytes of RAM to a massively parallel FPGA, and now, with modern Hopfield layers, to GPU-accelerated deep learning pipelines.
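The difference between the two update schedules shows up even in a two-neuron network. The sketch below uses a hand-picked toy case, two neurons joined by a single positive weight, to show synchronous updates cycling while asynchronous updates settle; all names are illustrative.

```python
def sign_or_keep(field, current):
    """Sign of the local field; a zero field keeps the current state."""
    if field > 0:
        return 1
    if field < 0:
        return -1
    return current

def sync_step(W, s):
    """Update every neuron at once from the same old state."""
    n = len(s)
    return [sign_or_keep(sum(W[i][j] * s[j] for j in range(n)), s[i])
            for i in range(n)]

def async_sweep(W, s):
    """Update neurons one at a time, each seeing the latest state."""
    s = list(s)
    n = len(s)
    for i in range(n):
        s[i] = sign_or_keep(sum(W[i][j] * s[j] for j in range(n)), s[i])
    return s

W = [[0, 1], [1, 0]]   # two neurons that want to agree
start = [1, -1]

sync_states = [start]
for _ in range(4):
    sync_states.append(sync_step(W, sync_states[-1]))

async_result = async_sweep(W, start)
```

Under synchronous updates each neuron copies the other's old value, so the pair swaps forever between (1, −1) and (−1, 1). Under asynchronous updates the first neuron moves, the second then sees the new value, and the network settles at (−1, −1).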

Storage Capacity: The Honest Constraint

The classical limitation is clear: a network of N neurons reliably stores approximately 0.138N patterns before spurious states degrade retrieval. This is a real constraint, but it's an honest one. Transformers don't have a clean mathematical bound on when they'll start hallucinating.

Modern Hopfield networks relax this bound. Dense associative memories (Krotov & Hopfield, 2016) use higher-order interaction terms to reach a capacity that grows faster than linearly in N, and exponential interaction functions (Demircigil et al., 2017) achieve exponential storage capacity. Research continues to expand these boundaries: sparse and structured Hopfield networks, long-sequence Hopfield memory, and Hopfield networks for asset allocation are all active areas of development, with papers accumulating rapidly.
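The capacity limit is easy to probe empirically. The sketch below stores random patterns with the Hebbian rule and measures what fraction of stored bits are stable under one update. The network size, the two load levels, and the seed are arbitrary choices, and the 1/N factor is dropped because it does not change the sign of the local field.

```python
import random

def stable_bit_fraction(n, num_patterns, rng):
    """Fraction of stored bits that agree with the sign of their local field."""
    patterns = [[rng.choice([-1, 1]) for _ in range(n)]
                for _ in range(num_patterns)]
    # Hebbian weights without the 1/N scaling (the sign is unaffected).
    W = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(i + 1, n):
                W[i][j] += p[i] * p[j]
                W[j][i] = W[i][j]
    stable = 0
    for p in patterns:
        for i in range(n):
            field = sum(W[i][j] * p[j] for j in range(n))
            if p[i] * field >= 0:   # a zero field leaves the bit unchanged
                stable += 1
    return stable / (n * num_patterns)

rng = random.Random(0)
low_load = stable_bit_fraction(100, 5, rng)    # 0.05 patterns per neuron
high_load = stable_bit_fraction(100, 50, rng)  # 0.5 patterns per neuron
```

Well below the 0.138N limit essentially every stored bit is stable. Well above it, crosstalk between patterns flips a noticeable fraction of bits, which is the degradation the bound describes.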
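A dense associative memory replaces the pairwise energy with a sum of a steeper function F over pattern overlaps. The sketch below assumes the polynomial choice F(x) = x³ and an update that compares the energy with neuron i set to +1 against neuron i set to −1. It is written from the general form of the Krotov and Hopfield model, not from their code, and the patterns and names are illustrative.

```python
def dense_update(patterns, state, i, power=3):
    """Choose the sign of neuron i that gives the lower dense-memory energy."""
    diff = 0
    for p in patterns:
        # Overlap of this pattern with every neuron except i.
        rest = sum(p[j] * state[j] for j in range(len(state)) if j != i)
        # F(overlap with s_i = +1) minus F(overlap with s_i = -1), F(x) = x**power
        diff += (rest + p[i]) ** power - (rest - p[i]) ** power
    if diff > 0:
        return 1
    if diff < 0:
        return -1
    return state[i]

def dense_recall(patterns, state):
    """Asynchronous updates until a full sweep changes nothing."""
    s = list(state)
    changed = True
    while changed:
        changed = False
        for i in range(len(s)):
            new = dense_update(patterns, s, i)
            if new != s[i]:
                s[i] = new
                changed = True
    return s

patterns = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, 1, -1, -1, 1, 1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]
corrupted = [1, 1, 1, -1, -1, -1, -1, -1]   # first pattern, fourth bit flipped
result = dense_recall(patterns, corrupted)
```

The cubic term makes the best-matching pattern dominate the update far more strongly than in the pairwise network, which is the mechanism behind the larger capacity.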

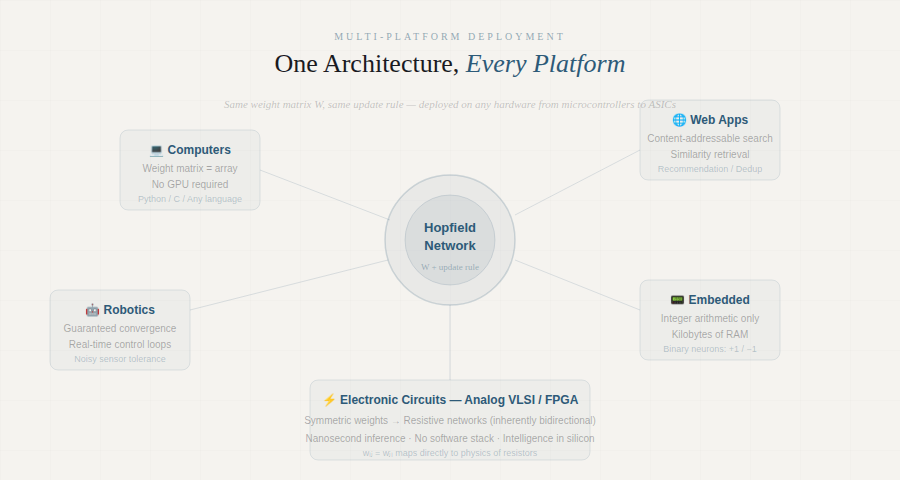

Practical Deployment: From PCs to Circuits

The original vision of early neural network researchers was clear: a framework that could be described once and deployed everywhere. Hopfield's architecture realizes this:

Computer applications: A weight matrix and an update rule. No GPU required.

Internet applications: Content-addressable memory for similarity search, recommendation, and deduplication.

Robotics: Guaranteed convergence for real-time control loops. Motor patterns stored as attractors, recalled even from noisy sensor input.

Embedded systems: Binary Hopfield networks run on microcontrollers with integer arithmetic alone.

Electronic circuits: Symmetric weights map to resistive networks. Intelligence fabricated directly in silicon.

The Path Forward

The transformer era gave us extraordinary language machines. But the frontier is expanding into the physical world: robots, sensors, actuators, and real-time decisions. That frontier demands architectures that are stable, efficient, interpretable, and deployable on hardware that actually exists at the edge.

The 2020 proof that transformer attention is a modern Hopfield update rule wasn't just a mathematical curiosity; it revealed that we've been using Hopfield networks all along, wrapped in a framework that obscured their true nature. The 2025 NeurIPS work showing that Hopfield hidden states solve transformer pathologies like rank collapse suggests the next step: not patching transformers with Hopfield insights, but building directly on the Hopfield paradigm itself.

John Hopfield showed us the way in 1982. Donald Hebb laid the biological foundations in 1949. The research community is converging on it now.

It's time to switch.

References

- Hopfield, J.J. (1982). "Neural networks and physical systems with emergent collective computational abilities." PNAS, 79(8), 2554–2558.

- Hebb, D.O. (1949). The Organization of Behavior. Wiley.

- Ramsauer, H. et al. (2020). "Hopfield Networks is All You Need." ICLR 2021. arXiv:2008.02217

- Krotov, D. & Hopfield, J.J. (2016). "Dense Associative Memory for Pattern Recognition." NeurIPS.

- Storkey, A. (1997). "Increasing the capacity of a Hopfield network without sacrificing functionality." ICANN.

- Hoover, B., Krotov, D. et al. (2023). "Energy Transformer." NeurIPS 2023. arXiv:2302.07253

- Masumura, T. & Taki, M. (2025). "On the Role of Hidden States of Modern Hopfield Network in Transformer." NeurIPS 2025. arXiv:2511.20698

- Alonso, N. & Krichmar, J. (2024). "A sparse quantized Hopfield network for online-continual memory." Nature Communications, 15, 3722. nature.com

- Hu, J.Y. et al. (2024). "Outlier-Efficient Hopfield Layers for Large Transformer-Based Models." arXiv:2404.03828

- Hu, J.Y. et al. (2026). "Transformers as Intrinsic Optimizers: Forward Inference through the Energy Principle." arXiv:2511.00907

- Hopfield, J.J. & Tank, D.W. (1985). "'Neural' Computation of Decisions in Optimization Problems." Biological Cybernetics, 52, 141–152.

- Bruck, J. (1990). "On the convergence properties of the Hopfield model." Proceedings of the IEEE.

- Krotov, D. (2025). "Searching for brain-inspired AI algorithms." IBM Research Blog. research.ibm.com

- "Energy-Based Learning and the Evolution of Hopfield Networks." TechRxiv, April 2025. techrxiv.org